Blocky is my first attempt at Grafana and Prometheus.

For starters, this was great:

https://grafana.com/grafana/dashboards/13768-blocky/

This was not great:

https://github.com/0xERR0R/blocky-grafana-prometheus-example

As I've never had a working Grafana/Prometheus setup, I'm not sure what is a real problem and what might be self-inflicted.

(Running Void Linux, glibc not musl, fwiw.)

tail -F /var/log/socklog/everything/current

2022-12-12T18:52:08.87858 daemon.info: Dec 12 13:20:33 grafana: logger=context traceID=00000000000000000000000000000000 userId=0 orgId=0 uname= t=2022-12-12T13:52:08.87-0500 lvl=info msg="Request Completed" method=GET path=/d/JvOqE4gRk/blocky status=302 remote_addr=10.20.250.18 time_ms=0 duration=242.444µs size=29 referer= traceID=00000000000000000000000000000000

2022-12-12T18:52:18.66284 daemon.info: Dec 12 13:20:33 grafana: logger=http.server t=2022-12-12T13:52:18.66-0500 lvl=info msg="Successful Login" User=admin@localhost

2022-12-12T18:52:19.32945 daemon.info: Dec 12 13:20:33 grafana: logger=context traceID=00000000000000000000000000000000 userId=1 orgId=1 uname=admin t=2022-12-12T13:52:19.32-0500 lvl=info msg="Request Completed" method=GET path=/api/live/ws status=0 remote_addr=10.20.250.18 time_ms=2 duration=2.864183ms size=0 referer= traceID=00000000000000000000000000000000

2022-12-12T18:53:01.16633 daemon.info: Dec 12 13:20:33 grafana: logger=tsdb.prometheus t=2022-12-12T13:53:01.16-0500 lvl=eror msg="Range query failed" query="sum(increase(blocky_prefetch_hit_count[300s])) / (sum(increase(blocky_cache_hit_count[300s])))" err="Post \"http://localhost:9090/api/v1/query_range\": context canceled"

2022-12-12T18:53:01.16670 daemon.info: Dec 12 13:20:33 grafana: logger=context traceID=00000000000000000000000000000000 userId=1 orgId=1 uname=admin t=2022-12-12T13:53:01.16-0500 lvl=info msg="Request Completed" method=POST path=/api/ds/query status=400 remote_addr=10.20.250.18 time_ms=4 duration=4.369778ms size=98 referer="http://10.20.0.15:3000/d/JvOqE4gRk/blocky?orgId=1&from=now-5m&to=now&refresh=10s" traceID=00000000000000000000000000000000

2022-12-12T18:53:01.17926 daemon.info: Dec 12 13:20:33 grafana: logger=tsdb.prometheus t=2022-12-12T13:53:01.17-0500 lvl=eror msg="Range query failed" query="sum(blocky_prefetch_domain_name_cache_count)/ sum(up{job=\"blocky\"})" err="Post \"http://localhost:9090/api/v1/query_range\": context canceled"

2022-12-12T18:53:01.17937 daemon.info: Dec 12 13:20:33 grafana: logger=context traceID=00000000000000000000000000000000 userId=1 orgId=1 uname=admin t=2022-12-12T13:53:01.17-0500 lvl=info msg="Request Completed" method=POST path=/api/ds/query status=400 remote_addr=10.20.250.18 time_ms=1 duration=1.254691ms size=98 referer="http://10.20.0.15:3000/d/JvOqE4gRk/blocky?orgId=1&from=now-5m&to=now&refresh=10s" traceID=00000000000000000000000000000000

2022-12-12T18:54:24.32408 daemon.info: Dec 12 13:20:33 grafana: logger=tsdb.prometheus t=2022-12-12T13:54:24.32-0500 lvl=eror msg="Range query failed" query="sum by (client) (rate(blocky_query_total[5m])) * 60" err="Post \"http://localhost:9090/api/v1/query_range\": context canceled"

2022-12-12T18:54:24.32420 daemon.info: Dec 12 13:20:33 grafana: logger=context traceID=00000000000000000000000000000000 userId=1 orgId=1 uname=admin t=2022-12-12T13:54:24.32-0500 lvl=info msg="Request Completed" method=POST path=/api/ds/query status=400 remote_addr=10.20.250.18 time_ms=242 duration=242.716032ms size=98 referer="http://10.20.0.15:3000/d/JvOqE4gRk/blocky?orgId=1&from=now-5m&to=now&refresh=10s" traceID=00000000000000000000000000000000

2022-12-12T18:54:24.41804 daemon.info: Dec 12 13:37:49 prometheus: ts=2022-12-12T18:54:24.417Z caller=api.go:1540 level=error component=web msg="error writing response" bytesWritten=0 err="write tcp 127.0.0.1:9090->127.0.0.1:23280: write: broken pipe"

2022-12-12T20:13:55.65922 daemon.info: Dec 12 13:20:33 grafana: logger=context traceID=00000000000000000000000000000000 userId=1 orgId=1 uname=admin t=2022-12-12T15:13:55.65-0500 lvl=info msg="Request Completed" method=GET path=/api/live/ws status=0 remote_addr=10.20.250.18 time_ms=4 duration=4.738284ms size=0 referer= traceID=00000000000000000000000000000000
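To see which dashboard queries fail and how often, the "Range query failed" lines in a log like the one above can be tallied per query. This is only a minimal sketch: the function name `count_failed_queries` is made up for illustration, and it assumes the Grafana log format shown above (a `query="..."` field followed by an `err=` field).

```shell
# Hypothetical helper: tally Grafana "Range query failed" lines per query.
# Reads log text on stdin and prints "<count> <query>", most frequent first.
count_failed_queries() {
    grep 'msg="Range query failed"' \
        | sed -n 's/.* query="\(.*\)" err=.*/\1/p' \
        | sort | uniq -c | sort -rn \
        | sed 's/^ *//'
}

# Possible usage, with the log path from the tail command above:
#   count_failed_queries < /var/log/socklog/everything/current
```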

10.20.250.18 is where I am, viewing the instance at 10.20.0.15. Grafana, Prometheus, and Blocky are all on 10.20.0.15.

I cannot print the Grafana dashboard as a PDF.

Is there a problem here, or did I do something wrong?
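One way to check whether Prometheus itself can answer these queries, independent of Grafana, is to send one of the failing expressions straight to the `query_range` endpoint that appears in the log. This is a sketch under assumptions: the helper names `window_start` and `prom_query_range`, the five-minute window, and the 15-second step are all illustrative choices, not taken from the dashboard.

```shell
# Hypothetical helper: epoch second that lies $2 seconds before epoch second $1.
window_start() {
    echo $(( $1 - $2 ))
}

# Hypothetical helper: run the PromQL expression in $1 over the last 5 minutes.
# Uses the Prometheus address from the Grafana log (localhost:9090).
prom_query_range() {
    end=$(date +%s)
    start=$(window_start "$end" 300)
    curl -s -G 'http://localhost:9090/api/v1/query_range' \
        --data-urlencode "query=$1" \
        --data-urlencode "start=$start" \
        --data-urlencode "end=$end" \
        --data-urlencode "step=15"
}

# Possible usage, run on the host where Prometheus listens:
#   prom_query_range 'sum by (client) (rate(blocky_query_total[5m])) * 60'
```

A JSON response containing `"status":"success"` would mean Prometheus can evaluate the expression. In that case the canceled requests more likely originate on the Grafana side, for example a panel request being abandoned on refresh. That is an inference, not something the logs prove.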

this looks and seems accurate

this also seems accurate and correct

This is a three-hour view. Aside from the html, is the 97.4% shown for the Cache Hit/Miss ratio the hit rate or the miss rate?

And this happens a lot:
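A rough cross-check is to compute the hit percentage directly from Blocky's raw counters and compare it with what the panel shows. The sketch below assumes a `blocky_cache_miss_count` counter exists alongside the `blocky_cache_hit_count` seen in the log (that name is an assumption here), and the function name `cache_hit_percent` is made up. The counters cover the time since Blocky started, not the three-hour window, so the result will only be in the same ballpark.

```shell
# Hypothetical helper: hit percentage = 100 * hits / (hits + misses).
# Reads Prometheus text-format metrics on stdin.
# blocky_cache_miss_count is an assumed metric name.
cache_hit_percent() {
    awk '
        /^blocky_cache_hit_count/  { hit  += $NF }
        /^blocky_cache_miss_count/ { miss += $NF }
        END {
            if (hit + miss > 0) printf "%.1f\n", 100 * hit / (hit + miss)
            else print "n/a"
        }'
}

# Possible usage, against the metrics endpoint used elsewhere in this post:
#   curl -s http://127.0.0.1:4000/metrics | cache_hit_percent
```

If this prints a value close to 97.4, the panel is most likely showing the hit rate. A value near 2.6 would point to the miss rate.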

logs:

2022-12-12T20:18:26.87177 daemon.info: Dec 12 13:20:33 grafana: logger=tsdb.prometheus t=2022-12-12T15:18:26.86-0500 lvl=eror msg="Range query failed" query="sum by (client) (rate(blocky_query_total[5m])) * 60" err="Post \"http://localhost:9090/api/v1/query_range\": context canceled"

2022-12-12T20:18:26.87634 daemon.info: Dec 12 13:20:33 grafana: logger=context traceID=00000000000000000000000000000000 userId=1 orgId=1 uname=admin t=2022-12-12T15:18:26.87-0500 lvl=info msg="Request Completed" method=POST path=/api/ds/query status=400 remote_addr=10.20.250.18 time_ms=84 duration=84.325457ms size=98 referer="http://10.20.0.15:3000/d/JvOqE4gRk/blocky?orgId=1&from=now-5m&to=now&refresh=10s" traceID=00000000000000000000000000000000

2022-12-12T20:21:36.12626 daemon.info: Dec 12 13:20:33 grafana: logger=context traceID=00000000000000000000000000000000 userId=1 orgId=1 uname=admin t=2022-12-12T15:21:36.12-0500 lvl=info msg="Request Completed" method=GET path=/api/live/ws status=0 remote_addr=10.20.250.18 time_ms=1 duration=1.460355ms size=0 referer= traceID=00000000000000000000000000000000

curl -v --stderr - http://127.0.0.1:4000/metrics | grep -c client

3933
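The count above is the number of output lines that contain the word "client", so it includes every series carrying a `client` label rather than the number of clients. To count distinct `client` label values instead, something like the following could be used. It is a sketch: the function name `count_clients` is hypothetical.

```shell
# Hypothetical helper: count distinct values of the "client" label.
# Reads Prometheus text-format metrics on stdin.
count_clients() {
    grep -o '[{,]client="[^"]*"' \
        | cut -c2- \
        | sort -u \
        | wc -l \
        | tr -d ' '
}

# Possible usage (curl -s keeps verbose output out of the pipe):
#   curl -s http://127.0.0.1:4000/metrics | count_clients
```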

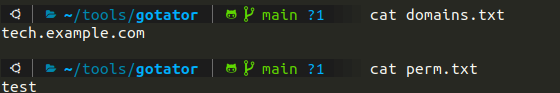

In case this is important:

xbps-query -l | grep 'grafana\|prometheus'

ii grafana-8.5.3_1 Open platform for beautiful analytics and monitoring

ii prometheus-2.38.0_1 Monitoring system and time series database

The machine is a VM: two cores, 2 GB RAM, XFS, kernel 6.0.9.

As I said, this is the closest I've been to having a working Grafana instance, so thank you for that.